Live migration of virtual machines

Go Live

Libvirt Instances

The next obstacle en route to live migration is to introduce the Libvirt instances on the nodes to one another. For a live migration between nodes A and B to work, the Libvirt instances need to cooperate. Specifically, this means that each Libvirt instance needs to open a TCP/IP socket. All you need are the following lines in /etc/libvirt/libvirtd.conf:

listen_tls = 0
listen_tcp = 1
auth_tcp = "none"

The configuration shown does not use any kind of authentication between cluster nodes, so you should exercise caution. Instead of TCP, you could set up SSL communication with the appropriate certificates. Such a configuration would be much more complicated, and I will not describe it in this article.
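
Because an unauthenticated listener is easy to enable and easy to forget, it can help to check the settings mechanically. The following Python sketch parses libvirtd.conf-style text and reports whether the TCP listener is on and whether it is unauthenticated. The function names `parse_libvirtd_conf` and `tcp_listener_report` are hypothetical helpers, not part of Libvirt, and the fallback values used for missing keys (listen_tls = 1, listen_tcp = 0, auth_tcp = "sasl") are assumed to match Libvirt's defaults; verify them against your version.

```python
import re


def parse_libvirtd_conf(text):
    """Return a dict of key -> value from libvirtd.conf-style text.

    Simplification: everything after a '#' is treated as a comment,
    so values that contain '#' are not supported by this sketch.
    """
    settings = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        match = re.match(r"^(\w+)\s*=\s*(.+)$", line)
        if match:
            key, value = match.groups()
            settings[key] = value.strip().strip('"')
    return settings


def tcp_listener_report(text):
    """Summarize the listener settings relevant to live migration."""
    conf = parse_libvirtd_conf(text)
    # Fallbacks are assumed Libvirt defaults, used when a key is absent.
    tcp_on = conf.get("listen_tcp", "0") == "1"
    tls_on = conf.get("listen_tls", "1") == "1"
    auth = conf.get("auth_tcp", "sasl")
    return {
        "tcp_enabled": tcp_on,
        "tls_enabled": tls_on,
        "unauthenticated": tcp_on and auth == "none",
    }


# The three lines from the article, as they would appear in the file.
SAMPLE = """
listen_tls = 0
listen_tcp = 1
auth_tcp = "none"
"""

print(tcp_listener_report(SAMPLE))
```

A report with `unauthenticated` set to true is a reminder that the migration network should be isolated from untrusted hosts.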

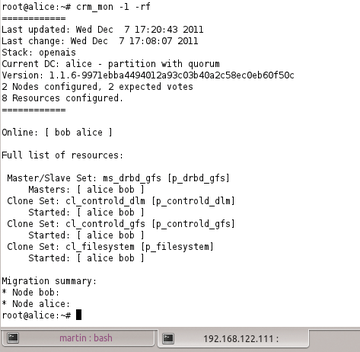

In addition to the changes in libvirtd.conf, administrators also need to ensure that Libvirt is launched with the -l (--listen) parameter. On Ubuntu, you just need to add the parameter to the libvirtd_opts entry in /etc/default/libvirt-bin and then restart Libvirt. At this point, there is nothing to prevent live migration, at least at the Libvirt level (Figure 3).

Figure 3: Given the right configuration parameters, Libvirt listens on a port for incoming connections from other Libvirt instances on the network.
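
To confirm from another node that the listener is actually reachable, a plain TCP connection test is enough. The sketch below assumes Libvirt's default TCP port, 16509, which the article does not state; if you changed tcp_port in libvirtd.conf, pass that value instead. The helper name `libvirt_tcp_reachable` is hypothetical.

```python
import socket

# Assumed default for Libvirt's unencrypted TCP listener (tcp_port).
LIBVIRT_TCP_PORT = 16509


def libvirt_tcp_reachable(host, port=LIBVIRT_TCP_PORT, timeout=2.0):
    """Return True if a TCP connection to host:port can be established.

    This only proves that something accepts connections on the port;
    it does not speak the Libvirt protocol.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and name lookup failures.
        return False
```

Calling `libvirt_tcp_reachable("nodeB")` on node A, and the reverse on node B, gives a quick indication of whether the -l parameter and the libvirtd.conf changes took effect on both sides; `nodeB` is a placeholder hostname.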

Live Migration with Pacemaker

For Pacemaker to control the live migration effectively, you still need to make a small change in the configuration that relates to the virtual machine definitions stored in Pacemaker. Left to its own devices, the Pacemaker agent that takes care of virtual machines would by default stop any VM that you try to migrate and then restart it on the target host.

To avoid this, the agent needs to learn that live migration is allowed. As an example, the following code shows an original configuration:

primitive p_vm-vm1 ocf:heartbeat:VirtualDomain \
  params config="/etc/libvirt/qemu/vm1.cfg" \
  op start interval="0" timeout="60" \
  op stop interval="0" timeout="300" \
  op monitor interval="60" timeout="60" start-delay="0"

To enable live migration, the configuration would need to be modified as follows:

primitive p_vm-vm1 ocf:heartbeat:VirtualDomain \
  params config="/etc/libvirt/qemu/vm1.cfg" migration_transport="tcp" \
  op start interval="0" timeout="60" \
  op stop interval="0" timeout="300" \
  op monitor interval="60" timeout="60" start-delay="0" \
  meta allow-migrate="true" target-role="Started"

The additional migration_transport parameter and the new allow-migrate meta attribute are particularly important. Given this configuration, the command

crm resource move p_vm-vm1

would migrate the virtual machine on the fly.
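
Before issuing the move, it can be worth checking that the resource definition really permits live migration, because otherwise Pacemaker falls back to stopping and restarting the VM. The following Python sketch inspects a primitive definition in crm shell syntax and builds the move command as an argument list. The helper names `live_migration_problems` and `move_command`, and the node name `nodeB`, are hypothetical; the check for migration_transport="tcp" reflects the TCP setup used in this article, not a general Pacemaker requirement.

```python
import re


def live_migration_problems(primitive_text):
    """Return reasons why this primitive would not live-migrate over TCP."""
    # Join backslash-continued lines so attributes can be matched anywhere.
    flat = re.sub(r"\\\s*\n", " ", primitive_text)
    # Collect name="value" pairs; repeated names (interval, timeout) are
    # overwritten, which does not matter for the two keys checked here.
    attrs = dict(re.findall(r'([\w-]+)="([^"]*)"', flat))
    problems = []
    if attrs.get("allow-migrate") != "true":
        problems.append('meta allow-migrate is not "true"')
    if attrs.get("migration_transport") != "tcp":
        problems.append('params migration_transport is not "tcp"')
    return problems


def move_command(resource, node=None):
    """Build the crm shell command that asks Pacemaker to move a resource."""
    cmd = ["crm", "resource", "move", resource]
    if node:
        cmd.append(node)  # optional explicit target node
    return cmd


ORIGINAL = r"""primitive p_vm-vm1 ocf:heartbeat:VirtualDomain \
  params config="/etc/libvirt/qemu/vm1.cfg" \
  op start interval="0" timeout="60" \
  op stop interval="0" timeout="300" \
  op monitor interval="60" timeout="60" start-delay="0"
"""

MIGRATABLE = r"""primitive p_vm-vm1 ocf:heartbeat:VirtualDomain \
  params config="/etc/libvirt/qemu/vm1.cfg" migration_transport="tcp" \
  op start interval="0" timeout="60" \
  op stop interval="0" timeout="300" \
  op monitor interval="60" timeout="60" start-delay="0" \
  meta allow-migrate="true" target-role="Started"
"""

for name, text in (("original", ORIGINAL), ("migratable", MIGRATABLE)):
    print(name, live_migration_problems(text))

print(" ".join(move_command("p_vm-vm1", "nodeB")))
```

Note that a move leaves a location constraint behind in the cluster configuration; in the crm shell, `crm resource unmove p_vm-vm1` should remove it again once the VM is allowed to run anywhere.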

iSCSI SANs

If you need to implement live migration on the basis of a SAN with an iSCSI connection, you face a much more difficult task, mainly because SAN storage is not really designed to understand concurrent access to specific LUNs from several nodes. Therefore, you need to add an intermediate layer and retrofit this ability.

Most admins do this with the cluster version of LVM (CLVM) or a typical cluster filesystem (GFS2 or OCFS2). In practical terms, the setup is as shown in Figure 4: On each node of the cluster, the iSCSI LUN is registered as a local device; an instance of CLVM or OCFS2/GFS2 takes care of coordinated access. This setup also requires that Libvirt itself provides reliable locking.

Setups like the one I just mentioned are thus only useful if Libvirt uses the sanlock [8] tool at the same time. This is the only way to prevent the same VM from running on more than one server at any given time, which would be fatal to the consistency of the filesystem within the VM itself.
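
For sanlock to tell the cluster nodes apart, each host needs its own ID. The Python sketch below generates one qemu-sanlock.conf body per node with a distinct host_id. The keys shown (auto_disk_leases, disk_lease_dir, host_id), the lease directory path, and the companion setting lock_manager = "sanlock" in qemu.conf are assumptions based on Libvirt's sanlock plugin and are not taken from this article, so check them against the documentation for your version. The function name `sanlock_configs` and the node names are hypothetical.

```python
def sanlock_configs(nodes, lease_dir="/var/lib/libvirt/sanlock"):
    """Map each node name to the text of its qemu-sanlock.conf.

    Every node receives a different host_id, starting at 1. The lease
    directory is assumed to live on storage that all nodes can reach.
    """
    if len(set(nodes)) != len(nodes):
        raise ValueError("node names must be unique")
    configs = {}
    for host_id, node in enumerate(nodes, start=1):
        configs[node] = (
            "auto_disk_leases = 1\n"
            f'disk_lease_dir = "{lease_dir}"\n'
            f"host_id = {host_id}\n"
        )
    return configs


for node, body in sanlock_configs(["nodeA", "nodeB"]).items():
    print(f"# {node}: /etc/libvirt/qemu-sanlock.conf")
    print(body)
```

Generating the files from a single list of nodes is one way to avoid two hosts accidentally sharing an ID, which would undermine the locking that this setup depends on.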
