Cloud-native storage for Kubernetes with Rook

Memory

Control by Kubernetes

Rook describes itself as cloud-native storage mainly because it comes with the API mentioned earlier – an API that advertises itself to the running containers as a Kubernetes service. In contrast to handmade setups, wherein the admin works around Kubernetes and pushes Ceph to containers, Rook provides the containers with all of Kubernetes' features in terms of volumes. For example, if a container requests a block device, it looks like a normal volume in the container but points to Ceph in the background.
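To make the volume request concrete, the following minimal sketch builds the kind of PersistentVolumeClaim a container workload would submit. The storage class name `rook-ceph-block`, the claim name, and the size are assumptions chosen for illustration; the class name in a real cluster depends on how the administrator set up Rook.

```python
import json


def block_volume_claim(name, size_gib, storage_class="rook-ceph-block"):
    """Build a PersistentVolumeClaim manifest as a plain dict.

    storage_class is a hypothetical name; use whatever class
    your Rook installation actually defines.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            # A block device is mounted read-write by a single node.
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": f"{size_gib}Gi"}},
        },
    }


if __name__ == "__main__":
    # JSON is accepted wherever Kubernetes accepts YAML manifests.
    print(json.dumps(block_volume_claim("mysql-data", 20), indent=2))
```

The printed manifest can be piped to `kubectl apply -f -`. Nothing in the claim mentions Ceph: from the container's point of view, it is an ordinary Kubernetes volume.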

Rook changes the configuration of the running Ceph cluster automatically, if necessary, without the admin or a running container having to do anything. Storing a specific configuration is also directly possible through the Rook API.

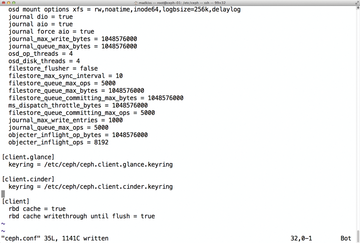

This capability has advantages and disadvantages. The developers expressly point out that one of their goals was to keep the administrator as far removed from running the Ceph cluster as possible. In fact, Ceph offers a large number of configuration options that Rook simply does not map (Figure 3), so they cannot be controlled with the Rook API.

Figure 3: Ceph offers almost infinite tuning possibilities, as shown here in ceph.conf, but Rook does not abstract them all.

Changing the Controlled Replication Under Scalable Hashing (CRUSH) map is a good example. CRUSH is the algorithm that Ceph uses to distribute data from clients to existing OSDs. Like the MON map and the OSD map, it is an internal database that the admin can modify, which is useful if you have disks of different sizes in your setup or if you want to distribute data across multiple fire zones in a cluster. Rook, however, does not map the manipulation of the CRUSH map, so corresponding API calls are missing.
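The idea behind weighted, zone-aware placement can be sketched in a few lines. The following is a toy model loosely inspired by the draw-and-pick-the-maximum approach, not Ceph's actual CRUSH implementation; the `OSD` type, the zone names, and the weights are all hypothetical.

```python
import hashlib
import math
from typing import NamedTuple


class OSD(NamedTuple):
    name: str
    zone: str      # failure domain, e.g., a fire zone
    weight: float  # roughly proportional to disk size


def _draw(obj_name, osd):
    """Deterministic pseudo-random draw; larger weights win more often."""
    digest = hashlib.sha256(f"{obj_name}/{osd.name}".encode()).digest()
    u = (int.from_bytes(digest[:8], "big") + 1) / 2**64  # u in (0, 1]
    return math.log(u) / osd.weight


def place(obj_name, osds, replicas=3):
    """Pick up to `replicas` OSDs for an object, at most one per zone."""
    ranked = sorted(osds, key=lambda o: _draw(obj_name, o), reverse=True)
    chosen, used_zones = [], set()
    for osd in ranked:
        if osd.zone in used_zones:
            continue
        chosen.append(osd.name)
        used_zones.add(osd.zone)
        if len(chosen) == replicas:
            break
    return chosen


if __name__ == "__main__":
    cluster = [
        OSD("osd.0", "zone-a", 1.0),
        OSD("osd.1", "zone-a", 4.0),
        OSD("osd.2", "zone-b", 1.0),
        OSD("osd.3", "zone-c", 2.0),
    ]
    print(place("object-1", cluster))
```

Because the placement is computed from the object name and the map alone, every client arrives at the same answer without asking a central directory. Changing a weight or a zone assignment in the map changes where data lands, which is exactly the kind of tuning that Rook does not yet expose.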

In the background, Rook works to ensure that users notice problems as little as possible. For example, if a node on which OSD pods are running fails, Rook ensures that a corresponding OSD pod is started on another cluster node after a wait time configured in Ceph. Rook relies on Ceph's self-healing capabilities here: If an OSD fails, meaning that the required number of replicas no longer exists for all binary objects in storage, Ceph automatically copies the affected objects to other OSDs. The customer's application does not notice this process; it just keeps on accessing its volume as usual.
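The self-healing step can be illustrated with a deliberately simplified model. The function below is a sketch under stated assumptions, not how Ceph performs recovery internally: the placement dictionary, the OSD names, and the first-fit choice of replacement OSDs are all hypothetical.

```python
def heal(placement, failed_osd, live_osds, replicas=3):
    """Return a new placement in which lost copies are recreated.

    placement maps an object name to the list of OSDs holding a copy.
    Replacement OSDs are picked first-fit from live_osds.
    """
    healed = {}
    for obj, holders in placement.items():
        survivors = [o for o in holders if o != failed_osd]
        candidates = [
            o for o in live_osds if o != failed_osd and o not in survivors
        ]
        # Copy the object to further OSDs until the replica count is restored.
        while len(survivors) < replicas and candidates:
            survivors.append(candidates.pop(0))
        healed[obj] = survivors
    return healed


if __name__ == "__main__":
    before = {
        "a": ["osd.0", "osd.1", "osd.2"],
        "b": ["osd.1", "osd.2", "osd.3"],
    }
    after = heal(before, "osd.1", ["osd.0", "osd.2", "osd.3", "osd.4"])
    print(after)
```

Objects that never had a copy on the failed OSD keep their placement unchanged, which is why clients reading them see no interruption.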

However, this approach carries a certain risk. Ceph is highly complex, regardless of whether an admin wants to experiment with CRUSH or other cluster parameters. Familiarity with the technology is still necessary because, for now, when the cluster behaves unexpectedly or a problem crops up that needs debugging, even the most amazing abstraction by Rook will be of no use. If you do not know what you are doing, the worst case scenario is the loss of data in the Ceph cluster.

At least the Rook developers are aware of this problem, and they are working on making various Ceph functions accessible through the Rook APIs in the future – explicitly, CRUSH.

Monitoring, Alerting, Trending

Even if Ceph, set up and operated by Rook, is largely self-managed, you will at least want to know what is currently happening in Ceph. Out of the box, Ceph comes with a variety of interfaces for exporting metric data about the current usage and state of the cluster. Rook configures these interfaces so that they can be used from the outside.

Of course, you can find ready-made Kubernetes templates online for Prometheus, another of the hip cloud-ready apps; these templates launch a complete monitoring, alerting, and trending (MAT) cluster in a matter of seconds [7]. The Rook developers, in turn, offer matching pods that wire the Rook-managed Ceph cluster to the Prometheus container. Detailed instructions can be found on the Rook website [8].

Ultimately, what you get is a complete MAT solution, including colorful dashboards in Grafana (Figure 4) that tell you how your Ceph cluster is getting on in real time.
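If you want to look at the raw data behind such dashboards, the metrics are exposed in the Prometheus text format, which is easy to read programmatically. The following minimal sketch parses a hand-written sample; the metric names `ceph_health_status` and `ceph_osd_up` and the `ceph_daemon` label are assumptions used for illustration, and the parser ignores edge cases such as braces inside label values.

```python
import re

# name, optional {labels}, value; trailing timestamps are ignored
SAMPLE = re.compile(r'^([a-zA-Z_:][a-zA-Z0-9_:]*)(\{[^}]*\})?\s+(\S+)')

EXAMPLE = """\
# HELP ceph_health_status Cluster health status
# TYPE ceph_health_status untyped
ceph_health_status 0.0
ceph_osd_up{ceph_daemon="osd.0"} 1.0
ceph_osd_up{ceph_daemon="osd.1"} 0.0
"""


def parse_metrics(text):
    """Return (name, labels, value) tuples, skipping comments and blanks."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        match = SAMPLE.match(line)
        if match:
            name, labels, value = match.groups()
            samples.append((name, labels or "", float(value)))
    return samples


def down_osds(samples):
    """Return the label sets of OSDs whose (assumed) up metric is 0."""
    return [
        labels for name, labels, value in samples
        if name == "ceph_osd_up" and value == 0.0
    ]


if __name__ == "__main__":
    print(down_osds(parse_metrics(EXAMPLE)))
```

In practice, Prometheus does this scraping for you; a script like this is mainly useful for quick checks or for understanding what an alerting rule evaluates.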

Looking to the Future

By the way, Rook is not designed to work exclusively with Ceph, even if you might have gained this impression in the course of reading this article. Instead, the developers have big plans: CockroachDB [9], for example, is a redundant, high-performance SQL database that is also on the Rook to-do list, as is Minio [10], a kind of competitor to Ceph. Be warned against building production systems with these storage solutions, though: Both drivers are still under heavy development. Like Rook itself, the Ceph driver is also tagged beta. However, the combination of Ceph and Rook is by far the most widespread, so large-scale teething pains are not to be expected.

Rook is backed by Upbound [11], a cloud-native Kubernetes provider. Although the list of maintainers does not contain many well-known names, a Red Hat employee is listed in addition to two people from Upbound, so you probably don't have to worry about the future of the project.

Of course, Red Hat itself is interested in creating the best possible connection between Kubernetes and Ceph, because Ceph is also marketed commercially as a replacement for conventional storage. Rook very conveniently came along as free software under the terms of Apache license v2.0.
