
Scalable network infrastructure in Layer 3 with BGP

Growth Spurt

Large-scale virtualization environments have ousted typical small setups. Whereas a company previously purchased a few physical servers to deploy an application, today, the entire workload of a new setup ends up on virtual machines running on a cloud service provider's platform.

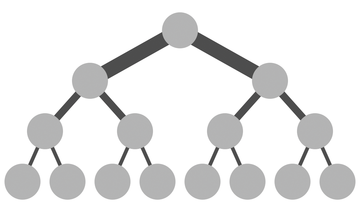

A physical layout is often based on a tree structure (Figure 1): The admin connects all the servers to one or two central switches and adds more switches whenever a switch runs out of ports. Together, the switches and network adapters form one large physical segment in OSI Layer 2.

Figure 1: Classic network architectures that follow a tree structure are not suitable for scale-out platforms.

In this article, I describe how you can use Layer 3 tools to build an almost arbitrarily scalable network for your environments. As long as two hosts have any kind of physical communication path, they can communicate on Layer 3, even if they reside in different Layer 2 segments. The Border Gateway Protocol (BGP) makes this possible by letting each server know how to reach the other servers. The term "IP fabric" describes this kind of data center interconnectivity over IP connections.
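To make the idea of "letting each server know how to reach other servers" more concrete, here is a minimal sketch of path-vector route propagation, the principle BGP builds on. It is a toy simulation under stated assumptions, not BGP itself: the four-node fabric (`leaf1`, `leaf2`, `spine1`, `spine2`), the prefixes, and the function names are hypothetical, node names stand in for AS numbers, and the only path-selection rule is "shortest path wins" (first heard wins on a tie). Real BGP evaluates many more attributes. Each node advertises what it knows to its neighbors, prepends itself to the path, and discards any path that already contains the receiving node, which is how BGP-style protocols avoid routing loops.

```python
from collections import deque

# Hypothetical fabric: node names, links, and prefixes are illustrative
# assumptions, not taken from the article.
LINKS = {
    ("leaf1", "spine1"), ("leaf1", "spine2"),
    ("leaf2", "spine1"), ("leaf2", "spine2"),
}
# Each leaf announces the (hypothetical) prefix of the servers behind it.
ORIGINATED = {"leaf1": "10.0.1.0/24", "leaf2": "10.0.2.0/24"}


def neighbors(links):
    """Build an adjacency map from an undirected set of links."""
    adj = {}
    for a, b in links:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj


def propagate(links, originated):
    """Simplified path-vector exchange (not real BGP).

    Each node stores, per prefix, the shortest path it has heard
    (nearest hop first; an empty tuple means "originated locally")
    and re-advertises it to its neighbors. A neighbor that already
    appears in the path rejects the advertisement (loop prevention).
    """
    adj = neighbors(links)
    rib = {node: {} for node in adj}  # rib[node][prefix] = path tuple
    queue = deque()
    for node, prefix in originated.items():
        rib[node][prefix] = ()
        queue.append((node, prefix))
    while queue:
        node, prefix = queue.popleft()
        # Path as seen by a neighbor: this node prepended to its own path.
        path = (node,) + rib[node][prefix]
        for peer in sorted(adj[node]):
            if peer in path:
                continue  # peer is already on the path: would form a loop
            best = rib[peer].get(prefix)
            if best is None or len(path) < len(best):
                rib[peer][prefix] = path
                queue.append((peer, prefix))
    return rib


if __name__ == "__main__":
    rib = propagate(LINKS, ORIGINATED)
    for node in sorted(rib):
        for prefix, path in sorted(rib[node].items()):
            via = " -> ".join(path) if path else "local"
            print(f"{node}: {prefix} via {via}")
```

After the exchange, every node holds a route to every announced prefix, although no node was configured with any route except its own. In this run, `leaf1` reaches `10.0.2.0/24` via `spine1 -> leaf2`; the equally long path through `spine2` is simply heard second and dropped by this simplified tie-breaking rule.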

ADMIN content is made possible with support from readers like you. Please consider contributing when you've found an article to be beneficial.