Link aggregation with kernel bonding and the Team daemon

Battle of Generations

No matter how many network interfaces a server has and how the admin combines them, hardware alone is not enough. To turn multiple network cards into a true failover solution or a high-bandwidth link, the host's operating system must also know that the devices work in tandem. On Linux, the usual answer is the bonding driver in the kernel; an alternative is libteam, which runs in user space and implements many additional functions and features.

A Need for NICs

Many people who see a data center from the inside for the first time are surprised by the large number of LAN ports on an average server (Figure 1): Virtually no server can manage with just one network interface, for several reasons.

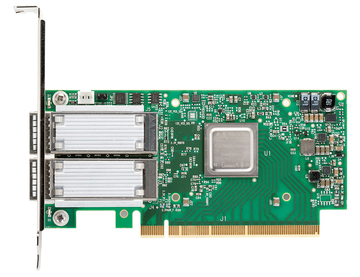

Figure 1: Network cards with multiple ports for redundancy or performance purposes are the rule rather than the exception in data centers.


- The owner might use several network interfaces to increase redundancy. In such a setup, the server is connected to separate switches by different network ports, so that the failure of one network device does not take down the server.

- The admin might want to

...
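To make the redundancy scenario from the first bullet more concrete, the following Python sketch checks whether a bonded interface still has more than one healthy member. It assumes the kernel bonding driver is in use and reports its state as text under `/proc/net/bonding/`. The interface names (`eth0`, `eth1`), the helper names `parse_bond_status` and `is_redundant`, and the sample text are made up for illustration; field labels can differ between kernel versions, so treat this as a starting point rather than a finished monitoring tool.

```python
from dataclasses import dataclass, field


@dataclass
class BondStatus:
    mode: str = ""
    active_slave: str = ""
    # Maps each member interface name to its MII link status, e.g. "up".
    members: dict = field(default_factory=dict)


def parse_bond_status(text: str) -> BondStatus:
    """Parse bonding status text of the kind found under /proc/net/bonding/."""
    status = BondStatus()
    current = None  # member interface whose section is currently being read
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # blank line or line without a "key: value" shape
        key, value = key.strip(), value.strip()
        if key == "Bonding Mode":
            status.mode = value
        elif key == "Currently Active Slave":
            status.active_slave = value
        elif key == "Slave Interface":
            current = value
            status.members[current] = "unknown"
        elif key == "MII Status" and current is not None:
            # Only per-member link states are recorded; the bond-level
            # "MII Status" line appears before any member section.
            status.members[current] = value
    return status


def is_redundant(status: BondStatus) -> bool:
    """Return True if at least two member links are up."""
    return sum(1 for state in status.members.values() if state == "up") >= 2


# Hand-written sample for illustration only, not captured from a real host.
SAMPLE = """\
Bonding Mode: fault-tolerance (active-backup)
Currently Active Slave: eth0
MII Status: up

Slave Interface: eth0
MII Status: up

Slave Interface: eth1
MII Status: down
"""

if __name__ == "__main__":
    bond = parse_bond_status(SAMPLE)
    print(bond.mode, bond.active_slave, bond.members, is_redundant(bond))
```

On a real host, the same function could be fed the contents of the bond's status file instead of `SAMPLE`. In the sample, the bond is still carrying traffic over `eth0`, but `is_redundant` flags that a second failure would take the server offline.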
