Zuul 3, a modern solution for CI/CD

Continuous Progress

Zuul 3 brings a new approach to the DevOps challenge of continuous integration and delivery. (For more on these concepts, see the "CI and CD" box.) Whereas other CI/CD tools like Jenkins, Bamboo, or TeamCity focus on constant building and testing, Zuul's main design goal is to be a gating mechanism. In other words, as soon as a proposed change is verified and approved by a human, it can undergo final tests and be deployed automatically to the production environment. Such a system requires a lot of computing power, but Zuul has been proven reliable and highly scalable by its designers: the OpenStack community.
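The gating idea can be illustrated with a minimal Python sketch. This is not Zuul's API or configuration format; the `Change` class, the `gate` function, and the `run_final_tests` and `deploy` callbacks are hypothetical names chosen only to show the order of the steps described above: a change must be verified and approved by a human before final tests run, and it is deployed only if those tests pass.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Change:
    """A proposed change (hypothetical model, not a Zuul data structure)."""
    change_id: str
    verified: bool = False   # automated checks have passed
    approved: bool = False   # a human reviewer has signed off


def gate(
    change: Change,
    run_final_tests: Callable[[Change], bool],
    deploy: Callable[[Change], None],
) -> str:
    """Let a change through to production only if every gate condition holds."""
    # A change that is not both verified and approved never reaches final tests.
    if not (change.verified and change.approved):
        return "waiting"
    # Final tests run after approval; a failure keeps the change out of production.
    if not run_final_tests(change):
        return "rejected"
    # Only a change that passed the final tests is deployed automatically.
    deploy(change)
    return "deployed"
```

In this sketch, the callbacks stand in for whatever test and deployment jobs a real pipeline would run; passing them as parameters keeps the gate logic independent of any particular tool.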

CI and CD

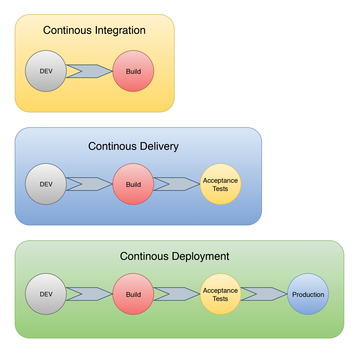

CI and CD are terms that pop up frequently in discussions of modern software development (Figure 1). CI stands for continuous integration, a practice that focuses on making releases easier to prepare. CD can mean either continuous delivery or continuous deployment, and although these practices have a lot in common, they also have significant differences [1].

...
