Opportunities and risks: Containers for DevOps

Logistics

Like the term "cloud," the phrase "DevOps" is used so widely these days that people can no longer agree on a single definition. A common definition states that DevOps is cooperation between developers and operations professionals that optimizes IT and development processes and automatically leads to better coordinated delivery of software and the appropriate infrastructure. Most definitions no longer lay down specific requirements regarding the tools or programs used.

Containers have become widely accepted, and the meteoric rise of Docker has fixed the technology in the minds of developers, administrators, and planners alike. On the one hand, containers quickly give developers a clean environment in which to experiment freely. On the other hand, containers significantly reduce the overhead required for deployment. You would be forgiven for thinking that a container is the technical implementation of the DevOps principle.

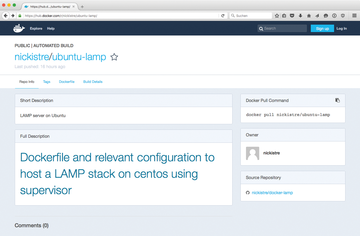

However, anyone dealing with containers could be taking a big risk in everyday operations, because a black box (Figure 1) in the form of a container is a frightening scenario for administrators.
