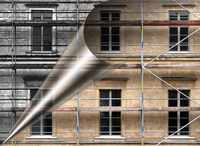

Lead Image © Max, fotolia.com

Ceph object store innovations

Renovation

When Red Hat took over Inktank Storage in 2014, thus gobbling up the Ceph [1] object store, a murmur went through the community. On the one hand, Red Hat already had a direct competitor to Ceph in its portfolio in the form of GlusterFS [2]. On the other, Inktank was such a young company, yet Red Hat had paid a large sum of cash for it. Nearly two years later, it is clear that GlusterFS plays only a minor role at Red Hat and that the company is instead relying increasingly on Ceph.

In the meantime, Red Hat has continued to develop Ceph, and many of the earlier teething problems have been resolved. Beyond bug fixes, developers and admins alike were looking for new features. Many admins find it difficult to warm to Calamari, the Ceph GUI promoted by Red Hat, and they need alternatives. The CephFS filesystem, which is the nucleus of Ceph, has been hovering in beta for two years and finally needs to be approved for production use. Moreover, Ceph has repeatedly drawn criticism for its performance, failing to compete with established storage solutions in terms of latency.

Red Hat thus had more than enough room for improvement, and much has happened in recent months. That is reason enough to take a closer look at Ceph, to determine whether the new functions offer genuine benefits to admins in everyday work, and to see what the new GUI has to offer.

CephFS: A Touchy Topic

The situation seems paradoxical: Ceph started life more than 10 years ago as a network filesystem. Sage Weil, the inventor of the solution, was researching distributed filesystems at the time as part of his PhD thesis. His goal was to create a better Lustre filesystem that eliminated Lustre's problems. However, because Weil made the Ceph project his main occupation and was
