Professional virtualization with RHV4

Polished

Cockpit for the Manager

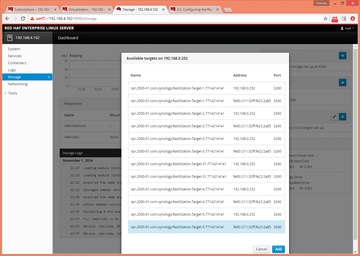

The Cockpit interface, installed by default on RHVH nodes, is also an interesting option for the Manager machine, where you can use it to set up additional storage devices such as iSCSI targets or additional network devices such as bridges, bonds, or VLANs. In Figure 1, I gave the Manager an extra disk in the form of an iSCSI logical unit number (LUN).

To install the Cockpit web interface, you just need to enable two repos, rhel-7-server-extras-rpms and rhel-7-server-optional-rpms, and then install the software by typing yum install cockpit. Cockpit listens on port 9090, which you open in firewalld as follows (unless you have disabled firewalld):

$ firewall-cmd --add-port=9090/tcp

$ firewall-cmd --permanent --add-port=9090/tcp
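The Cockpit steps above can be collected into one small script. This is a minimal sketch, not a definitive procedure: the run helper and the DRY_RUN switch are hypothetical names introduced here so that the script only prints the commands by default, and starting cockpit.socket through systemctl is an assumption about how the service is activated on the Manager machine.

```shell
#!/usr/bin/env bash
# Sketch: enable the two repos, install Cockpit, and open port 9090.
# DRY_RUN=1 (the default) only prints each command; set DRY_RUN=0 and
# run as root to actually apply the changes.
DRY_RUN="${DRY_RUN:-1}"

# Hypothetical helper: print the command in dry-run mode, else execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

setup_cockpit() {
    # Repos named in the article
    run subscription-manager repos \
        --enable=rhel-7-server-extras-rpms \
        --enable=rhel-7-server-optional-rpms
    run yum -y install cockpit
    # Assumption: Cockpit is socket-activated through cockpit.socket
    run systemctl enable cockpit.socket
    run systemctl start cockpit.socket
    # Open the port for the running firewall and persist the rule
    run firewall-cmd --add-port=9090/tcp
    run firewall-cmd --permanent --add-port=9090/tcp
}

setup_cockpit
```

Reviewing the printed commands before rerunning with DRY_RUN=0 keeps the sketch safe to try on a machine that is already in use.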

Now log in to the Cockpit web interface as root.

Setting Up oVirt Engine

To install and configure the oVirt Engine, enter:

$ yum install ovirt-engine-setup

$ engine-setup

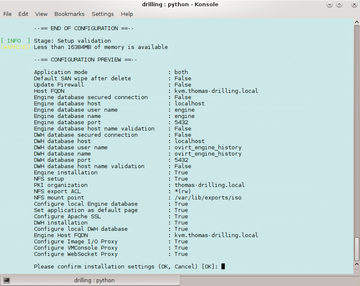

The engine-setup command takes you to a comprehensive command-line interface (CLI)-based wizard that guides you through the configuration of the virtualization environment. It should pose no problem for experienced Red Hat, vSphere, or Hyper-V admins, because the wizard suggests functional and practical defaults (in parentheses). Nevertheless, some steps differ from the previous version. For example, step 3 lets you set up an Image I/O Proxy, which expands the Manager with a convenient option for uploading VM disk images to the desired storage domains.

Setting up a WebSocket proxy server is also recommended if you want your users to connect to their VMs via the noVNC or HTML5 console. The optional setup of a VMConsole Proxy requires further configuration on client machines. In step 12, Application Mode, you can decide between Virt, Gluster, and Both, where the last option offers the greatest possible flexibility. Virt application mode only allows the operation of VMs in this environment, whereas Gluster application mode lets you manage GlusterFS via the administrator portal. After the engine configuration, the wizard continues with the public key infrastructure (PKI) configuration and then guides you through the setup of an NFS-based ISO domain under /var/lib/export/iso on the Manager machine.
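If you expect to repeat the wizard, for example in a test lab, the answers can be recorded once and replayed later. The following is a minimal sketch that relies on the --generate-answer and --config-append options of engine-setup; the answer file path, the run helper, and the DRY_RUN switch are hypothetical names chosen for this example, and by default the script only prints the commands.

```shell
#!/usr/bin/env bash
# Sketch: record engine-setup answers once, then replay them.
# DRY_RUN=1 (the default) only prints each command.
DRY_RUN="${DRY_RUN:-1}"
# Hypothetical location for the answer file
ANSWER_FILE="${ANSWER_FILE:-/root/engine-answers.conf}"

# Hypothetical helper: print the command in dry-run mode, else execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# First run: answer the wizard interactively and save the answers.
record_answers() {
    run engine-setup --generate-answer="$ANSWER_FILE"
}

# Later runs: feed the saved answers back to the wizard.
replay_answers() {
    run engine-setup --config-append="$ANSWER_FILE"
}

record_answers
```

Treat the answer file as sensitive, because it may contain the passwords you entered in the wizard.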

After displaying the inevitable summary page (Figure 2), the oVirt Engine setup completes; after a short while, you will be able to access the Manager splash page at https://rhvm-machine/ovirt-engine/ using the account name admin and the password you set during setup. From here, the other portals, such as the admin portal, the user portal, and the documentation, are accessible.

You need a working DNS configuration at this point, because access to all portals relies on the fully qualified domain name (FQDN). Because a "default" data center object already exists, the next step is to set up the required network and storage domains, add RHVH nodes, and, if necessary, build failover clusters.
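Before opening the portals, a quick check of the Manager's name can save some head-scratching. This is a minimal sketch under stated assumptions: the function names are hypothetical, the FQDN test is only a rough shape check (it does not accept a trailing dot), and resolution is tested with getent, which queries whatever resolver the local system is configured to use.

```shell
#!/usr/bin/env bash
# Sketch: sanity-check the Manager's name before using the portals.

# Returns 0 if the name looks fully qualified: at least two
# non-empty labels separated by dots.
is_fqdn() {
    case "$1" in
        .*|*.|*..*) return 1 ;;   # empty label somewhere
        *.*)        return 0 ;;   # host plus at least one domain label
        *)          return 1 ;;   # bare host name
    esac
}

# Returns 0 if the system resolver can resolve the name.
resolves() {
    getent hosts "$1" >/dev/null
}

# Prints a one-line verdict for the given name.
check_manager_name() {
    local name="$1"
    if ! is_fqdn "$name"; then
        echo "$name: not fully qualified"
        return 1
    fi
    if ! resolves "$name"; then
        echo "$name: does not resolve"
        return 1
    fi
    echo "$name: ok"
}
```

Calling check_manager_name with the name you entered in engine-setup (for example, a placeholder such as rhvm.example.com) on the Manager and on each client shows quickly whether all of them see the same name.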

Host, Storage, and Network Setup

For the small nested setup in the example here, I first used iSCSI and NFS as shared storage; however, later I will look at how to access Gluster or Ceph storage. For now, it makes sense to set up two shared storage repositories based on iSCSI disks or NFS shares. A 100GB LUN can accommodate, for example, the VM Manager if you are using the Hosted Engine setup described below. Because I am hosting the VM Manager on ESXi, I avoided a doubly nested VM, even though it is technically possible. As master storage for the VMs, I opted for a 500GB thin-provisioned iSCSI LUN.
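Before adding storage domains in the admin portal, it can help to confirm from a host that the shared storage is visible at all. The following minimal sketch uses iscsiadm discovery and showmount for that purpose; the portal and server addresses are placeholders from the documentation address range, and the run helper and DRY_RUN switch are hypothetical names that make the script print the commands by default.

```shell
#!/usr/bin/env bash
# Sketch: pre-flight check of shared storage from a virtualization host.
# DRY_RUN=1 (the default) only prints each command.
DRY_RUN="${DRY_RUN:-1}"
# Placeholder addresses; replace with your iSCSI portal and NFS server.
ISCSI_PORTAL="${ISCSI_PORTAL:-192.0.2.10}"
NFS_SERVER="${NFS_SERVER:-192.0.2.20}"

# Hypothetical helper: print the command in dry-run mode, else execute it.
run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

preflight_storage() {
    # List the iSCSI targets the portal offers
    run iscsiadm -m discovery -t sendtargets -p "$ISCSI_PORTAL"
    # List the NFS exports the server offers
    run showmount -e "$NFS_SERVER"
}

preflight_storage
```

If either command returns nothing when run for real, the problem lies with the storage server or the network rather than with the Manager.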
