Lead Image © Steve Everts, 123RF.com

NFS and CIFS shares for VMs with OpenStack Manila

Separate Silos

Those involved with OpenStack quickly get the impression that the subject of cloud storage has finally been solved, because at a key point in the setup, OpenStack Cinder makes sure virtual machines (VMs) are provided with storage in the form of persistent block devices (Figure 1). However, you will quickly notice that Cinder still has shortcomings: namely, at the point at which persistent, shared storage, rather than just persistent storage, is requested. NFS and CIFS implement this shared storage in classic computing environments, but Cinder has no solution whatsoever for this use case, leaving you out in the cold.

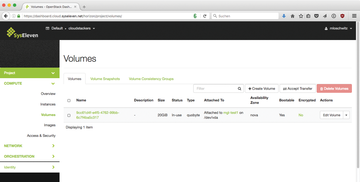

Figure 1: Cinder might take care of storage in OpenStack, but it only handles persistent block storage.

Yet, just as in classic setups, a cloud environment needs shared storage, so cloud customers should also be able to access shared storage from several VMs – and even beyond the boundaries of their own project. One of OpenStack's biggest strengths is its modularity, so it is no wonder that a component has been created that addresses the issue of shared storage: OpenStack Manila [1]. The aim of this module is to make it possible for VMs to use NFS and CIFS in OpenStack (

...
