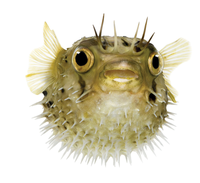

Lead Image © Eric Issel, 123RF.com

Managing Bufferbloat

All Puffed Up

Data sent across the Internet often takes different amounts of time to travel the same distance. The delay a packet experiences on the network comprises:

- transmission delay, the time required to send the packet over the communication links;

- processing delay, the time each network element spends processing the packet; and

- queue delay, the time spent waiting for processing or transmission.
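As a rough illustration of how these three components add up, the following sketch sums them hop by hop. The `Hop` class, its field names, and the example figures are hypothetical and chosen only for illustration. Propagation delay is left out because it is not one of the components listed above.

```python
from dataclasses import dataclass


@dataclass
class Hop:
    """One network element and its outgoing link (hypothetical model)."""
    link_rate_bps: float  # link speed in bits per second
    processing_s: float   # time the element spends processing the packet
    queue_s: float        # time the packet waits for processing or transmission


def transmission_delay(packet_bytes: int, link_rate_bps: float) -> float:
    """Time needed to put the whole packet onto a link, in seconds."""
    return packet_bytes * 8 / link_rate_bps


def path_delay(packet_bytes: int, hops: list[Hop]) -> float:
    """Sum of transmission, processing, and queue delay over all hops."""
    return sum(
        transmission_delay(packet_bytes, hop.link_rate_bps)
        + hop.processing_s
        + hop.queue_s
        for hop in hops
    )


if __name__ == "__main__":
    # Assumed example path: a fast LAN link followed by a slow, queued uplink.
    hops = [
        Hop(link_rate_bps=1e9, processing_s=0.0, queue_s=0.0),
        Hop(link_rate_bps=10e6, processing_s=0.0001, queue_s=0.005),
    ]
    print(f"{path_delay(1500, hops) * 1000:.3f} ms")
```

In this made-up example, the 5 ms spent queued at the slow hop dominates the total, although the transmission delays add up to little more than 1 ms.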

The data path between communicating endpoints typically consists of many hops with links of different speeds. The link with the lowest bandwidth along the path is the bottleneck: Packets cannot arrive at their destination any faster than the bottleneck link can transmit them.
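A minimal sketch of that limit, assuming a path described only by a list of link rates: the slowest link caps how many packets per second can reach the destination. The function names and the example rates are hypothetical.

```python
def bottleneck_rate(link_rates_bps):
    """The slowest link on the path determines the bottleneck data rate."""
    return min(link_rates_bps)


def max_packets_per_second(packet_bytes, link_rates_bps):
    """Upper bound on the packet arrival rate at the destination."""
    return bottleneck_rate(link_rates_bps) / (packet_bytes * 8)


if __name__ == "__main__":
    # Assumed example path: 1 Gbps, 100 Mbps, 20 Mbps, 1 Gbps.
    rates = [1e9, 100e6, 20e6, 1e9]
    print(bottleneck_rate(rates))
    print(max_packets_per_second(1500, rates))
```

However fast the other links are, the 20 Mbps link in this example limits the flow to fewer than 1,700 full-size packets per second.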

In practice, the delay time along the path – the time from the beginning of the transmission of a packet by the sender to the reception of the packet at the destination by the receiver – can be far longer than the time needed to transmit the packet at the bottleneck data rate. To ensure a constant packet flow at maximum speed, you need a sufficient number of packets in transmission to fill the path between the sender and the destination.
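The number of packets needed to fill the path can be estimated as the bottleneck data rate multiplied by the delay, divided by the packet size. This quantity is commonly called the bandwidth-delay product, a term the text above does not use. The sketch below assumes the round-trip time as the delay, on the reasoning that a sender has to keep transmitting until the first acknowledgment returns. The function name, the 1,500-byte packet size, and the example values are hypothetical.

```python
import math


def packets_to_fill_path(bottleneck_bps, rtt_s, packet_bytes=1500):
    """Estimate how many packets must be in transmission to keep the path full.

    bottleneck_bps: data rate of the slowest link, in bits per second
    rtt_s:          assumed round-trip time, in seconds
    packet_bytes:   assumed packet size, in bytes
    """
    bdp_bits = bottleneck_bps * rtt_s  # bits "in the pipe" at full rate
    return math.ceil(bdp_bits / (packet_bytes * 8))


if __name__ == "__main__":
    # Assumed example: 20 Mbps bottleneck, 50 ms round-trip time.
    print(packets_to_fill_path(20e6, 0.050))
```

With fewer packets in transmission than this estimate, the bottleneck link sits idle part of the time; packets beyond it cannot be sent any faster and have to wait somewhere along the path.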

Buffers temporarily store packets that arrive while a communication link is in use, which requires a corresponding amount of memory in the connecting component. However, the Internet has a design flaw known as bufferbloat that is caused by the incorrect use of these data buffers.

TCP/IP Data Throughput

System throughput is the data rate at which the number of packets the network delivers to the destination equals the number of packets transmitted into the network. As the number of packets in transmission increases, the throughput increases until the packets are sent and received at the bottleneck data rate. Putting even more packets in transmission does not increase the receive rate. If the

...
