Ceph object store innovations

Renovation

Latency Issues in Focus

The second significant change in Ceph concerns the object store itself: The developers have introduced a new storage back end for the OSDs, the daemons that hold the cluster's data. It is intended to make Ceph much faster, especially for small write operations.

Until now, if you want Ceph to use a block device, you need a filesystem on the hard disk or SSD. Ceph stores objects on the OSDs using a special naming scheme, but it does not yet manage the block device itself. The filesystem is therefore necessary for the respective ceph-osd daemon to be able to use a disk at all.

Over the course of its history, Ceph has recommended several filesystems for the task. Initially, the developers expected that Btrfs would soon be ready for production use and even designed many Ceph features explicitly with Btrfs in mind. When it became clear that Btrfs would take a while to reach production quality, Inktank changed its recommendation: Instead of Btrfs, XFS was now considered the best choice for Ceph OSDs (Figure 2).

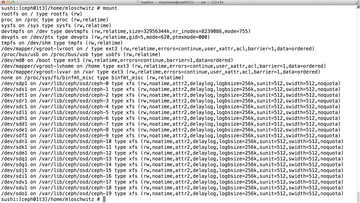

Figure 2: XFS is the Ceph developers' current recommendation for OSDs. However, BlueStore is likely to be the choice in the future.

Either way, Weil realized at a fairly early stage that a POSIX-compliant filesystem is a poor basis for OSDs, because most of the guarantees that POSIX offers for filesystems are virtually meaningless for an object store. One example is parallel access to individual files, which does not occur in a Ceph cluster: Each OSD is only ever managed by one OSD daemon, and the daemon handles requests in sequential order.

The fact that Ceph's object store does not need most POSIX functions is of little interest to a Btrfs or XFS filesystem residing on an OSD, though. Writes to OSDs therefore incur some overhead, because the filesystem spends time ensuring POSIX compliance in the background.

In terms of throughput, this overhead is negligible, but superfluous checks of this type do hurt when it comes to latency. Accordingly, many admins throw their hands up in horror if asked to run a VM, say, with MySQL on a Ceph RBD (RADOS block device) volume.

A comparison with fast flash solutions like Fusion-io is out of the question anyway, but Ceph's latency even exceeds that of plain Ethernet by a spectacular margin.
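To get a feel for why small synchronous writes are so sensitive to latency, the following minimal Python sketch times small writes that are each forced to stable storage, roughly the pattern a database produces. It is an illustration under stated assumptions, not a Ceph benchmark: the function names, the 4KB block size, and the write count are arbitrary choices made here, and the probe file can be placed on any mounted volume (for example, one backed by RBD) to compare storage back ends.

```python
import os
import statistics
import tempfile
import time


def measure_sync_write_latency(path, block_size=4096, count=100):
    """Return per-write latencies in seconds for small, synced writes."""
    payload = os.urandom(block_size)
    latencies = []
    fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o600)
    try:
        for _ in range(count):
            start = time.perf_counter()
            os.write(fd, payload)
            os.fsync(fd)  # force the write to stable storage before continuing
            latencies.append(time.perf_counter() - start)
    finally:
        os.close(fd)
    return latencies


def summarize(latencies):
    """Condense raw latencies into a few figures (milliseconds, writes/s)."""
    ordered = sorted(latencies)
    p99_index = min(len(ordered) - 1, int(len(ordered) * 0.99))
    return {
        "mean_ms": statistics.mean(ordered) * 1000,
        "median_ms": statistics.median(ordered) * 1000,
        "p99_ms": ordered[p99_index] * 1000,
        "writes_per_s": len(ordered) / sum(ordered),
    }


if __name__ == "__main__":
    # The temporary directory is a placeholder; point it at the volume to test.
    with tempfile.TemporaryDirectory() as tmp:
        probe = os.path.join(tmp, "probe.bin")
        stats = summarize(measure_sync_write_latency(probe))
    for key, value in stats.items():
        print(f"{key}: {value:.3f}")
```

Because every write waits for the previous one to complete, the number of writes per second is simply the inverse of the average latency, which is why latency rather than throughput decides how such workloads perform.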

BlueStore to the Rescue

With BlueStore, the Ceph developers intend to eliminate the problem of POSIX filesystems permanently. The approach comprises several parts: BlueFS is a rudimentary filesystem that has just enough features to operate a disk as an OSD, and a RocksDB key-value store holds the information the OSDs need.
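The general idea of pairing a raw data area with a key-value index, instead of a full POSIX filesystem, can be illustrated with a toy model. The following Python sketch is not BlueStore code and does not mirror its on-disk format; the class name, the append-only allocator, and the object names are all hypothetical and serve only to show how little machinery an object store needs for basic put and get operations.

```python
class ToyObjectStore:
    """Toy model: a raw data area plus a key-value index (not real BlueStore)."""

    def __init__(self):
        self.device = bytearray()  # stands in for the raw block device
        self.index = {}            # stands in for the key-value store

    def put(self, name, data):
        """Write an object and record where it lives."""
        offset = len(self.device)  # trivial append-only allocator
        self.device.extend(data)
        # One small metadata record per object: no directories,
        # no permissions, no per-file locking.
        self.index[name] = (offset, len(data))

    def get(self, name):
        """Look up the object's location and read it back."""
        offset, length = self.index[name]
        return bytes(self.device[offset:offset + length])

    def delete(self, name):
        """Forget the object; this sketch does not reclaim its space."""
        del self.index[name]


store = ToyObjectStore()
store.put("object-a", b"first version")
store.put("object-b", b"other data")
store.put("object-a", b"second version")  # overwrite: the index now points to new data
print(store.get("object-a"))
print(sorted(store.index))
```

Each operation touches only the data area and a single index entry, whereas a general-purpose filesystem would additionally maintain everything POSIX prescribes for files and directories.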

Because the POSIX layer is eliminated, BlueStore should be vastly superior to XFS and Btrfs as an OSD back end, especially for small write operations. The Ceph developers back up this claim with performance figures showing that BlueStore offers clear advantages in a direct comparison.

BlueStore is already usable as the OSD back end in the Jewel version. However, the feature is still tagged "experimental" in Jewel [4], so the developers advise against production use. Never mind: The mere fact that the developers understand the latency problem and have already started to eliminate it will be enough to make many admins happy. In addition to BlueStore, a number of further changes in Jewel should have a positive overall effect on both throughput and latency.

The Eternal Stepchild: Calamari

The established storage vendors are successful because they deliver their storage systems with simple GUIs that offer easy management options. Because an object store with dozens of nodes is no less complex than typical SAN storage, Ceph admins would usually expect a similarly convenient graphical interface.

Inktank itself responded several years ago to constantly recurring requests for a GUI and tossed Calamari onto the market. Originally, Calamari was a proprietary add-on to Ceph support contracts, but shortly after the acquisition of Inktank, Red Hat converted Calamari into an open source product and invited the community to participate in the development – with moderate success: Calamari meets with skepticism from both admins and developers for several reasons.

Calamari sees itself not only as a GUI, but as a complete monitoring tool for Ceph cluster performance; for example, it raises the alarm when OSDs fail in a Ceph cluster. One good reason Calamari has not been a resounding success thus far is that it is quite complicated to persuade it to cooperate at all. The software relies on SaltStack, for instance, which it wants to set up itself. If you already have a configuration management tool, you cannot meaningfully integrate Calamari with it and are forced to run two automation tools at the same time.
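What "raising the alarm when OSDs fail" boils down to can be sketched in a few lines of Python. The example below has nothing to do with how Calamari is implemented; it assumes the JSON layout printed by `ceph osd tree --format json` (a `nodes` list whose OSD entries carry `type` and `status` fields) and works on a trimmed sample with made-up host and OSD names. The function names are hypothetical.

```python
import json

# Trimmed sample in the assumed shape of `ceph osd tree --format json`;
# the host and OSD names are made up.
SAMPLE_TREE = """
{
  "nodes": [
    {"id": -1, "name": "default", "type": "root", "children": [-2]},
    {"id": -2, "name": "node1", "type": "host", "children": [0, 1]},
    {"id": 0, "name": "osd.0", "type": "osd", "status": "up"},
    {"id": 1, "name": "osd.1", "type": "osd", "status": "down"}
  ],
  "stray": []
}
"""


def down_osds(tree):
    """Return the names of all OSD entries whose status is not 'up'."""
    return sorted(
        node["name"]
        for node in tree.get("nodes", [])
        if node.get("type") == "osd" and node.get("status") != "up"
    )


def alert_message(tree):
    """Build a one-line alert, or return None if every OSD is up."""
    failed = down_osds(tree)
    if not failed:
        return None
    return "WARNING: {} OSD(s) down: {}".format(len(failed), ", ".join(failed))


print(alert_message(json.loads(SAMPLE_TREE)))
```

On a real cluster, the sample string would be replaced by the output of the command itself, and the message would be handed to whatever monitoring system is already in place.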

In terms of functionality, Calamari is not without fault. The program offers visual interfaces for all major Ceph operations – for example, the CRUSH map that controls the placement of individual objects in Ceph is configurable via Calamari – but the Calamari interface is less than intuitive and requires much prior knowledge if you expect a working Ceph cluster in the end.
