Operating large language models in-house

At Home

Installing Open WebUI

For friendlier user interactions, and for user login in particular, you will want to install Open WebUI on your server. The web interface is best run as a Docker container directly on the AI server. To install Open WebUI with Docker, use the commands in Listing 1. After the installation, the web interface is initially reachable without encryption at http://<IP address>:8080. If you want to use SSL later, you can set this up on your web server. The first step after the installation is to log in to Open WebUI.

Listing 1: Open WebUI Docker Install

```shell
# Add Docker's official GPG key
sudo apt-get update
sudo apt-get install ca-certificates curl
sudo install -m 0755 -d /etc/apt/keyrings
sudo curl -fsSL https://download.docker.com/linux/ubuntu/gpg -o /etc/apt/keyrings/docker.asc
sudo chmod a+r /etc/apt/keyrings/docker.asc

# Add the Docker repository to the APT sources
echo "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu $(. /etc/os-release && echo "$VERSION_CODENAME") stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

# Install Docker Engine and its plugins
sudo apt-get update
sudo apt-get install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin

# Run Open WebUI on the host network so it can reach Ollama at 127.0.0.1:11434;
# the web interface then listens on port 8080
sudo docker run -d --network=host -v open-webui:/app/backend/data \
  -e OLLAMA_BASE_URL=http://127.0.0.1:11434 \
  --name open-webui --restart always ghcr.io/open-webui/open-webui:main
```
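Before the first login, it can help to confirm that both services actually answer. The following POSIX shell sketch polls a URL with curl until it responds or the attempts run out. The function name `wait_for_http` and the retry values are illustrative choices, not part of Open WebUI or Ollama; the sketch assumes Ollama listens on its default port 11434 and that Open WebUI, started with `--network=host` as in Listing 1, listens on port 8080.

```shell
#!/bin/sh
# Hypothetical helper: poll an HTTP endpoint until it answers or the
# attempts run out. Requires curl (installed by Listing 1).
wait_for_http() {
  local url="$1"
  local attempts="${2:-10}"   # default: 10 tries
  local i=1
  while [ "$i" -le "$attempts" ]; do
    # -f: fail on HTTP errors, -sS: silent except for error messages,
    # --max-time: give up on a single request after 5 seconds
    if curl -fsS --max-time 5 -o /dev/null "$url"; then
      echo "OK: $url"
      return 0
    fi
    # Wait before the next try, but not after the last one
    [ "$i" -lt "$attempts" ] && sleep 2
    i=$((i + 1))
  done
  echo "FAILED: $url" >&2
  return 1
}
```

For example, `wait_for_http http://127.0.0.1:11434/` checks Ollama, which replies "Ollama is running" at its root URL, and `wait_for_http http://<IP address>:8080/` checks the web interface. If the second check fails, `sudo docker logs open-webui` shows the container's output.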

Customizations

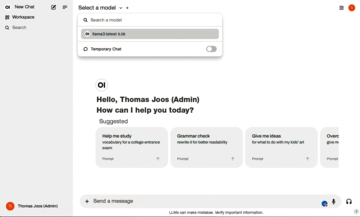

After logging in to Open WebUI, you can access the settings by clicking the user icon. There you can adjust, for example, the language, the design, the interface, and the options for speech-to-text and for saving and importing chats. Under Select a model, select the default model (Figure 1) and specify which of the downloaded LLMs are available in your environment.
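The Select a model list can only offer models that Ollama has already downloaded. As a sketch, the following shell functions extract the model names from the output of `ollama list`, whose first column is NAME, and test whether a given model is present. The function names are hypothetical, and the parsing assumes the tabular layout with a single header line that current Ollama versions print.

```shell
#!/bin/sh
# Hypothetical helpers that read `ollama list` output on stdin.

# Print only the first column (the model name), skipping the header line
model_names() {
  awk 'NR > 1 && NF > 0 { print $1 }'
}

# Succeed only if the exact model name (e.g. "llama3.2:latest") is listed
has_model() {
  model_names | grep -Fqx -- "$1"
}
```

For example, `ollama list | has_model llama3.2:latest || ollama pull llama3.2` downloads the example model llama3.2 only if it is missing, after which it can be chosen as the default model.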

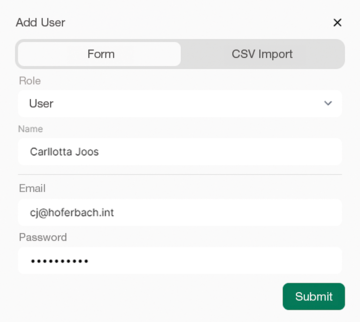

Clicking the plus sign gives you access to the function for creating new user accounts and roles. In larger environments, you can add new users in bulk under CSV Import (Figure 2). If the Enable New Sign Ups option is enabled in the Admin Panel under Settings | General, new users can sign up on their own. The Default User Role setting defines which role new users are given automatically. You can change this role later in user management under Users | Overview. If the role is pending, an administrator needs to approve access by assigning a role after the user has registered.
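For the CSV Import, the file can be generated from an existing staff list. The sketch below assumes the column order Name, Email, Password, Role with a header row, as in the sample template offered by the import dialog of recent Open WebUI versions; check the template in your version before relying on it. It also assumes that admin, user, and pending are the valid role names and generates a random initial password for each user. The function name `make_user_csv` and its input format (one "name,email" pair per line) are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: turn "name,email" lines on stdin into a CSV for
# Open WebUI's CSV Import. ASSUMPTION: columns Name,Email,Password,Role
# with a header row; valid roles admin, user, pending.
make_user_csv() {
  local role name email pw
  role="${1:-user}"
  case "$role" in
    admin|user|pending) ;;
    *) echo "invalid role: $role" >&2; return 1 ;;
  esac
  echo "Name,Email,Password,Role"
  # "|| [ -n ... ]" also handles a last line without a trailing newline
  while IFS=, read -r name email || [ -n "$name" ]; do
    [ -z "$name" ] && continue   # skip empty lines
    # 12 random bytes, base64-encoded: a 16-character initial password
    pw=$(head -c 12 /dev/urandom | base64)
    printf '%s,%s,%s,%s\n' "$name" "$email" "$pw" "$role"
  done
}
```

For example, `make_user_csv user < staff.csv > openwebui-users.csv` (both file names hypothetical) creates an import file in which every account gets the user role. The result contains plaintext passwords, so delete it after the import and pass the initial passwords to the users over a secure channel.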

Connecting Ollama to the Internet

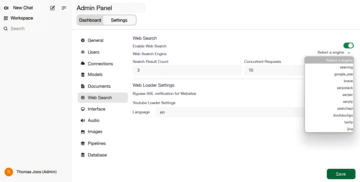

By default, the server does not access the Internet. To change this, turn on the Enable Web Search option in the Admin Panel under Settings | Web Search and choose a Web Search Engine; these settings determine whether and how the AI service searches for information on the Internet. DuckDuckGo is just one of many search engines available (Figure 3). Numerous other options under Settings let you customize the Ollama AI services.

Figure 3: If you want the LLM to be able to access the web, you need to modify the web search settings.

You can also connect your AI server to external AI services (e.g., OpenAI) under Settings | Connections in the Admin Panel. You need to enter the API URL and the key for the connection. Although all these settings are optional, you should go through them after the installation and adapt them to your requirements.
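Before entering a key under Settings | Connections, you can check it from the server's shell. This sketch assumes the key is stored in the `OPENAI_API_KEY` environment variable and that the provider offers the OpenAI-compatible `GET /models` endpoint; `https://api.openai.com/v1` is OpenAI's public API base URL, and the function name `check_openai_key` is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: test an API key against an OpenAI-compatible API.
# Returns 2 if no key is set, otherwise curl's exit status
# (0 = key accepted; with -f, an HTTP 401/403 makes curl fail).
check_openai_key() {
  local base_url="${1:-https://api.openai.com/v1}"
  if [ -z "${OPENAI_API_KEY:-}" ]; then
    echo "OPENAI_API_KEY is not set" >&2
    return 2
  fi
  # GET /models lists the models the key is allowed to use
  curl -fsS --max-time 10 -o /dev/null \
    -H "Authorization: Bearer $OPENAI_API_KEY" \
    "$base_url/models"
}
```

For example, after `export OPENAI_API_KEY='<your key>'`, running `check_openai_key && echo "key accepted"` confirms the key before you store it in Open WebUI. Passing a different base URL as the first argument checks other OpenAI-compatible services the same way.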
